Second Self

An augmented mirror platform that overlays interactive applications on a live reflection using depth sensing, pose estimation, and spatial alignment.

Platform for recreational, medical, and educational augmented mirror experiences

Overview

Turning the mirror into a responsive mixed-reality platform for learning and movement.

- Uses a one-way mirror, screen, depth camera, and laptop to create an interactive augmented mirror.

- Relies on Intel RealSense D435 depth sensing and MediaPipe pose estimation to align overlays with the user's reflection.

- Supports application modules for menu navigation, sign language learning, and dance practice.

- Treats the mirror as a reusable platform rather than a single-purpose app.

Platform concept

Second Self proposes an augmented mirror that can host multiple applications instead of only showing lightweight dashboard widgets. The mirror blends a live reflection with digital overlays that respond to body position and movement.

The ambition is broad but coherent: recreational, medical, and educational modules can all live on top of the same sensing and rendering backbone.

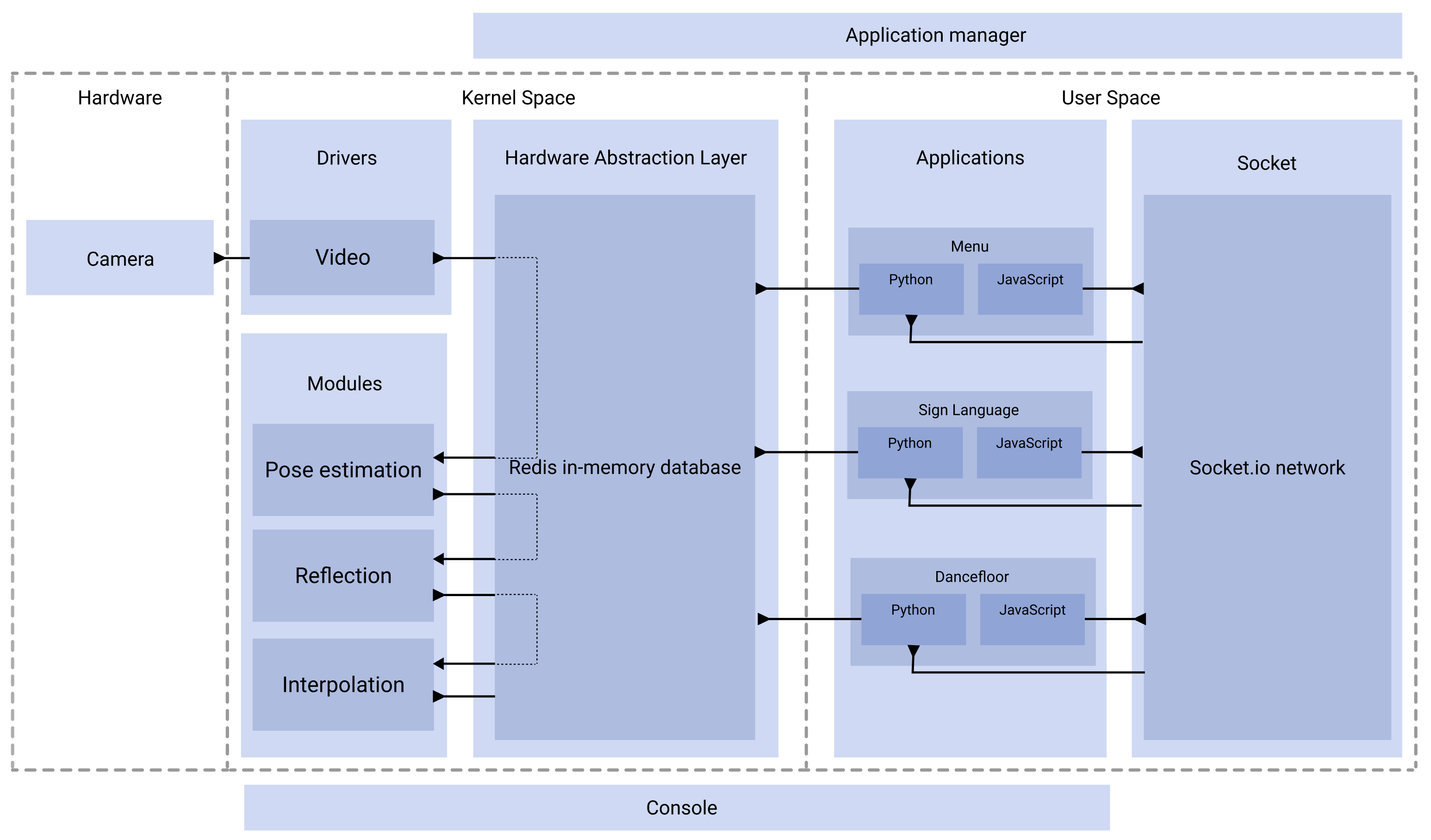

Sensing and interaction pipeline

The platform uses an Intel RealSense D435 depth camera to capture the environment and the user, then runs pose estimation to recover body landmarks. Additional modules project those coordinates into reflection space so that digital content lines up with the mirror image.
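The first half of this pipeline, turning a 2D pose landmark into a 3D point, can be sketched as follows. This is a minimal sketch, assuming the color and depth streams are already aligned (as librealsense's `align` processing block does) and the depth image has been converted to metres. MediaPipe Pose reports landmark `x` and `y` normalized to the image width and height, so each landmark is scaled to a pixel, its depth is sampled, and the pixel is back-projected with an undistorted pinhole model. The `Intrinsics` values and all helper names are hypothetical; on real hardware they would come from the camera's reported stream intrinsics.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class Intrinsics:
    """Hypothetical pinhole intrinsics of the depth-aligned color stream (pixels)."""
    width: int
    height: int
    fx: float
    fy: float
    cx: float
    cy: float


def landmark_to_pixel(x_norm: float, y_norm: float, intr: Intrinsics) -> Tuple[int, int]:
    """Scale a normalized landmark (x, y in [0, 1]) to a pixel, clamped to the image."""
    u = min(max(round(x_norm * intr.width), 0), intr.width - 1)
    v = min(max(round(y_norm * intr.height), 0), intr.height - 1)
    return u, v


def deproject(u: int, v: int, depth_m: float, intr: Intrinsics) -> Tuple[float, float, float]:
    """Back-project pixel (u, v) at depth_m metres into the camera frame (no lens distortion)."""
    x = (u - intr.cx) * depth_m / intr.fx
    y = (v - intr.cy) * depth_m / intr.fy
    return (x, y, depth_m)


def landmark_to_camera_point(
    x_norm: float, y_norm: float, depth_m: Sequence[Sequence[float]], intr: Intrinsics
) -> Optional[Tuple[float, float, float]]:
    """Look up depth at the landmark's pixel (row-major depth_m[v][u]) and back-project it."""
    u, v = landmark_to_pixel(x_norm, y_norm, intr)
    z = depth_m[v][u]
    if z <= 0:
        return None  # a depth of 0 marks pixels with no valid measurement
    return deproject(u, v, z, intr)
```

With intrinsics whose principal point sits at the image center, a landmark at `(0.5, 0.5)` over 2 m of valid depth maps to `(0.0, 0.0, 2.0)` in the camera frame.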

That alignment step is the key design move: it makes the mirror feel less like a screen placed behind glass and more like an interface that lives directly on the body.
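The alignment can be illustrated with plane-mirror geometry. This is a minimal sketch, assuming body points and the viewer's eye (for example, deprojected eye landmarks) have already been transformed into a hypothetical mirror frame in which the glass is the plane z = 0, the user stands at z > 0, and the display sits flush behind the glass. A body point P = (x, y, z) appears in the reflection at its mirror image P' = (x, y, -z). The overlay must be drawn where the eye's line of sight to P' crosses the glass: along the ray E + t(P' - E), that happens at t = E_z / (E_z + P_z). The calibration values `screen_origin` and `px_per_m` are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


def reflect_across_mirror(p: Vec3) -> Vec3:
    """Mirror image of p across the glass plane z = 0 (hypothetical mirror frame, metres)."""
    x, y, z = p
    return (x, y, -z)


def overlay_point_on_mirror(eye: Vec3, p: Vec3) -> Vec2:
    """Point on the glass where the eye's line of sight to the reflection of p crosses z = 0."""
    ex, ey, ez = eye
    if ez <= 0 or p[2] <= 0:
        raise ValueError("eye and body point must be in front of the mirror (z > 0)")
    rx, ry, rz = reflect_across_mirror(p)
    t = ez / (ez - rz)  # solves ez + t * (rz - ez) = 0, i.e. t = ez / (ez + p_z)
    return (ex + t * (rx - ex), ey + t * (ry - ey))


def mirror_to_screen_px(m: Vec2, screen_origin: Vec2, px_per_m: float) -> Tuple[int, int]:
    """Map a glass-plane point (metres, y up) to display pixels (v grows downward).

    screen_origin (glass-plane position of the display's top-left pixel) and px_per_m
    are hypothetical calibration values; the display is assumed flush with the glass.
    """
    mx, my = m
    x0, y0 = screen_origin
    return (round((mx - x0) * px_per_m), round((y0 - my) * px_per_m))
```

When the eye and the body point are equally far from the glass, t = 1/2 and the overlay lands halfway between them in x and y, which is the familiar reason a reflection of your whole body fits on a mirror half your height. Because the answer depends on the eye position, overlays drawn this way stay aligned only for the tracked viewer.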

Application modules

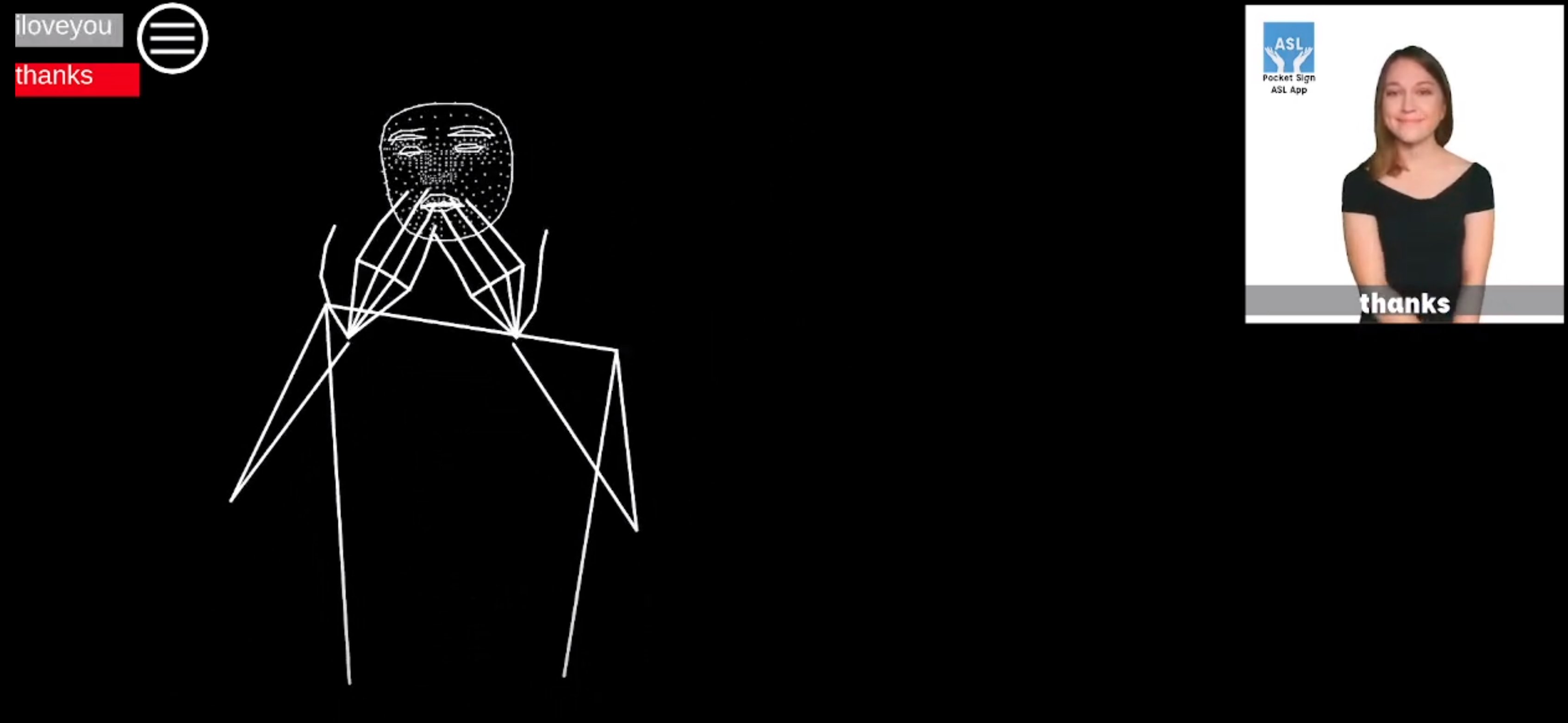

The project documentation describes three example applications. A menu lets the user launch modules with dwell-based gesture input. A sign-language module uses pose-based recognition to help people learn a vocabulary of signs. A dance module compares the user against a prerecorded performer and gives immediate feedback.
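Dwell-based selection can be sketched as a small state machine: a button fires once the tracked hand has stayed inside it for a fixed time, and it does not fire again until the hand leaves. This sketch assumes the hand position has already been mapped to screen pixels (for instance, from a wrist or index-finger landmark); the class name, the rectangle format, and the 1.5 s default are illustrative choices, not values from the project.

```python
import time
from typing import Dict, Optional, Tuple

Rect = Tuple[float, float, float, float]  # (x0, y0, x1, y1) in screen pixels


class DwellSelector:
    """Hypothetical dwell-to-select helper: fires a button after the hand rests on it for dwell_s."""

    def __init__(self, buttons: Dict[str, Rect], dwell_s: float = 1.5):
        self.buttons = buttons
        self.dwell_s = dwell_s
        self._current: Optional[str] = None  # button under the hand, if any
        self._since = 0.0                     # when the hand entered _current
        self._fired = False                   # already selected during this hover?

    def _hit(self, x: float, y: float) -> Optional[str]:
        for name, (x0, y0, x1, y1) in self.buttons.items():
            if x0 <= x <= x1 and y0 <= y <= y1:
                return name
        return None

    def update(self, hand_xy: Optional[Tuple[float, float]], now: Optional[float] = None) -> Optional[str]:
        """Feed one frame's hand position (None if untracked); return a button name on selection."""
        now = time.monotonic() if now is None else now
        name = self._hit(*hand_xy) if hand_xy is not None else None
        if name != self._current:
            # Hover target changed (or tracking was lost): restart the dwell timer.
            self._current, self._since, self._fired = name, now, False
            return None
        if name is not None and not self._fired and now - self._since >= self.dwell_s:
            self._fired = True  # fire once; the hand must leave and re-enter to fire again
            return name
        return None
```

Resetting the timer whenever tracking drops trades a little responsiveness for fewer accidental launches, which matters when the "cursor" is a body part that is always in view.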
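One simple way a dance module could compare the user against a prerecorded performer, offered here as an assumed approach rather than the project's documented method, is to compare joint angles frame by frame. Angles computed from 2D landmarks are unaffected by translation and uniform scaling in the image, so the user does not need to stand exactly where the performer stood. The joint triples use MediaPipe Pose's 33-landmark indices (11/12 shoulders, 13/14 elbows, 15/16 wrists, 23/24 hips, 25/26 knees, 27/28 ankles); the 20-degree tolerance and the fraction-of-joints score are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]

# Joint triples (a, b, c): the angle is measured at b. Indices follow MediaPipe Pose's layout.
JOINTS: Dict[str, Tuple[int, int, int]] = {
    "left_elbow": (11, 13, 15),
    "right_elbow": (12, 14, 16),
    "left_shoulder": (23, 11, 13),
    "right_shoulder": (24, 12, 14),
    "left_knee": (23, 25, 27),
    "right_knee": (24, 26, 28),
}


def angle_deg(a: Point, b: Point, c: Point) -> float:
    """Angle ABC in degrees for 2D points; 0.0 if a segment has zero length."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        return 0.0
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp float noise


def compare_pose(
    user: Sequence[Point], reference: Sequence[Point], tolerance_deg: float = 20.0
) -> Tuple[float, List[str]]:
    """Return (fraction of joints within tolerance, sorted names of joints outside it)."""
    errors = {
        name: abs(angle_deg(user[i], user[j], user[k]) - angle_deg(reference[i], reference[j], reference[k]))
        for name, (i, j, k) in JOINTS.items()
    }
    score = sum(1 for e in errors.values() if e <= tolerance_deg) / len(errors)
    off = sorted(name for name, e in errors.items() if e > tolerance_deg)
    return score, off
```

The list of off-tolerance joints is what would drive immediate feedback, such as highlighting the user's left elbow on the mirror. Depending on how the reference was recorded, left and right joints may need to be swapped so the user mirrors the performer.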

The project materials also include animated assets for piano and sign-language experiences, which underline the platform's focus on guided, embodied learning.